Artificial wellness is a big topic these days.

For $365 per year, you can swing by Function Health and test 100 biomarkers, covering thyroid, cardiovascular health, heavy metals, nutrients, and much more. If you’re feeling ambitious, add SiPhox Health, Direct Labs, Ways2Well, and maybe squeeze in a Prenuvo or Ezra scan to hunt for early-stage cancers, aneurysms, and structural abnormalities. If you have enough time, I don’t know if you’ll have enough time.

And if that feels invasive or exhausting, you can always wait for AI-powered “electronic noses” to smell illnesses before symptoms appear. A friend of mine is making big-time progress with a handheld device that can detect COVID by scent alone, although the current administration doesn’t seem to care too much about that type of thing. But OpenAI does. Recently, it acquired Torch (for roughly $60 million), a health-care startup building a unified medical database to consolidate patient health data. OpenAI claims conversations in ChatGPT Health will be stored separately and not used for training. Everything else, like Google, still feeds the machine.

Which raises a bigger question.

What makes wellness uniquely challenging for AI? It isn’t data volume, it’s precision. Health data has structure, constraints, and real consequences. You can’t be “close enough, “or “mostly right.” Small errors compound quietly until they matter A LOT. And, as the use of Artificial General Intelligence accelerates, systems capable of autonomous reasoning only increase the stakes. If today’s models struggle with precision in constrained tasks, what confidence should we have in future models making health judgments that demand accuracy?

That question reminded me of a post I wrote last year about which yoga poses certain tech CEOs might favor. While experimenting, I asked several LLMs to generate images of common yoga poses.

Spoiler Alert: It took HOURS of prompting AI to generate the correct poses.

If LLMs struggle to reproduce something as common and structurally constrained as a yoga pose, how will they handle our medical records and our health?

THE ASANA TEST

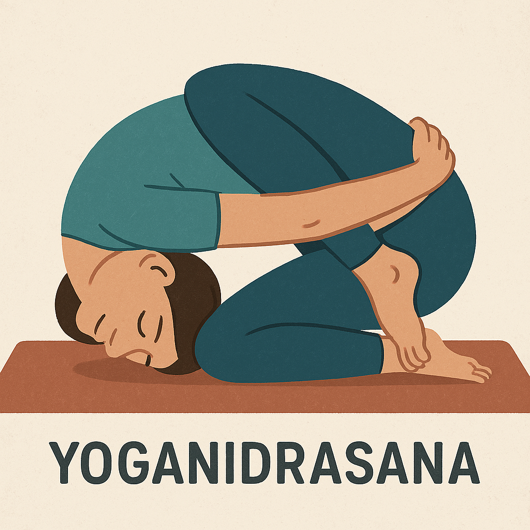

I ran what I call The Asana Test, an alignment under constraint evaluation comparing LLMs based on their ability to generate a single yoga pose. The prompt was simple: Can you please make me a 2D illustration of a person doing the Ashtanga yoga pose Yoganidrasana – also known as the Yoga Sleep Posture?

As a control, I ran a rudimentary Google Images search with the prompt, “a person doing the Ashtanga yoga pose Yoganidrasana – also known as the Yoga Sleep Posture.” The result: hundreds of accurate examples. Yes, there were some variations, but all images and illustrations produced were within human capability.

Now that we know what the pose looks like, let’s see what the models produced for The Asana Test. (The results reflect the publicly available versions of each model at the time of testing.)

ChatGPT

I love yoga. I’ve been practicing it for over 25 years. Never have I seen this pose. If I attempted it, I wouldn’t find my center, I’d shatter it and wake up in the emergency room. I weep for this gentleman’s lower back, especially at L4 and L5. Most yogis would kill for this flexibility. I’m also unclear if he’s asleep or has passed on to the heavenly realm, also known as Moksha.

ChatGPT went straight for the pose. No room. No context. No framing. Just what it believed the pose most likely was. It was dead wrong. Is this a lack of knowledge of the human body and its physical constraints? Instruction parsing? Whatever the cause, ChatGPT confidently produced an impossible pose.

Gemini

I appreciate the effort by this student. She’s flexible. Attempting to give her corrections, leading her into the proper pose, would almost certainly foster a moment of awkwardness, but may lead to dinner and drinks. I do appreciate her yoga instructor, Gemini, setting the mood with plants and candles, hopefully scented, along with a yoga mat, which was a nice touch.

Without looking under the hood, Gemini appears to have reasoned the context of the actual pose by creating a room where yoga would most likely be practiced. That’s good. But did that context distract from the pose itself? To me, Gemini got the room right, but fell asleep during Yoganidrasana.

Claude

Claude went all in. “A” for effort. The room is strong with plants, a mandala-style lotus rosette on the wall, a yoga mat, and even some accurate shadowing. Clear contextual understanding. By the way, lotus rosettes serve as calming focal points, reinforcing centeredness and mindfulness. Unfortunately, they do nothing for a neck rotated 180 degrees and pinned to the floor. Also, don’t even get me started on the legs…

Claude got the room right. The pose was catastrophically wrong. With access to endless pertinent information, how does an LLM fail to understand the limits of the human neck? Unless Claude mistook this class to be instructed by Linda Blair.

CoPilot

We’ve all heard of LLMs hallucinating, but good lord… There’s hallucinating, and then there’s a South American Ayahuasca trip. CoPilot, feeling ever so confident, even labeled the pose, albeit incorrectly, which wasn’t necessary. But like a good co-pilot, redundancies are standard.

With a doormat being the only attempt at context and a hint of shadow, our poor yogi was folded with blatant disregard for the human spine. A more appropriate context for this scene would have been at the hospital. Also, don’t even get me started on the legs… Not sure what to say here. If you are an LLM with access to trillions of data points, including digitized books on yoga, correct images of the pose, and human anatomy, how does this happen? CoPilot focused entirely on the pose and still failed. No plants. No windows. Possibly out of mercy.

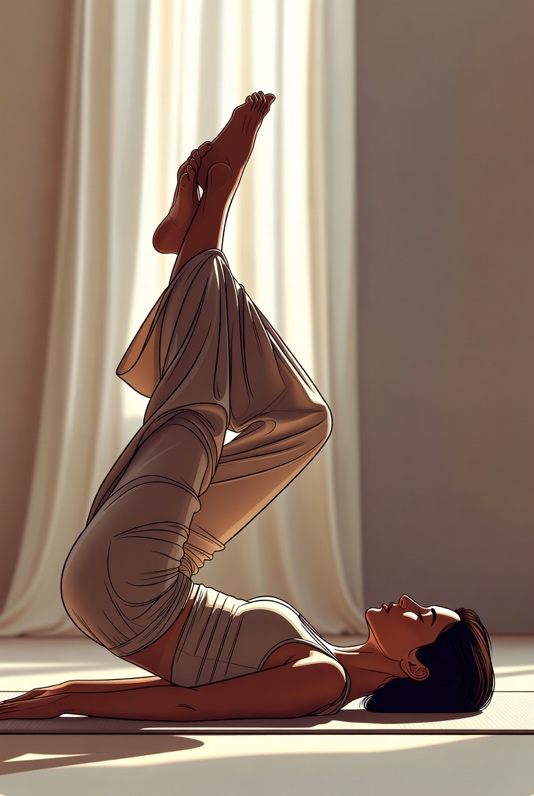

Grok

Grok went for what feels like a sensual scene. That’s so Grok. The room is pleasant. The pose is incorrect, but at least she’ll survive. Actually, the more I look at this image, the more I feel Grok probably has a crush on this woman. It’s kind of creeping me out, actually.

I can almost feel the breeze coming through those thinly veiled drapes. The shadows suggest there is a second window positioned out of frame. The woman’s clothing seems like yoga attire, but the longer you look, the closer it gets to the sexy pajama category. I could keep speculating, but it feels like I’m an accessory to stalking.

THE RESULT

It’s easy to point out the flaws in LLMs today. You especially don’t have to look hard to see their limited capabilities in spatial reasoning, as evidenced by my Asana Test. But when LLMs handle our health, failures will be harder to detect. No heads spun 180 degrees.

I will say, it’s also not hard to find a yoga teacher who has never heard of Yoganidrasana. Every time I go to my nearby suburban studio, I’m always surprised by how few yoga teachers actually know the asanas, let alone the sutras. I guess charlatans exist everywhere, whether artificial or not.

So, how do you know what’s right and what’s wrong, unless you’re a master yogi or a Harvard Medical graduate?

The other day, I asked a CISO (Chief Information Security Officer) at one of the largest banks in the world how they thwart Deepfakes, another form of deception, and his answer was stunning: “We rely on physical interviews and physical onboarding. Only trust the real.”

Institutions can fall back on physical verification, but AI health has no such fallback.

With what I’ve covered here, and breakthroughs such as Digital Twins of ourselves around the corner, and the vulnerabilities that they will pose, I hope OpenAI and others will take health and the wellness craze a bit more seriously. These companies must create meaningful benchmarks that wouldn’t ask whether health advice sounds reasonable; they would test whether it holds up to scrutiny, missing data, and ambiguity. They would also measure how well health data is stored, isolated, and actively protected.

In the meantime, if this all seems a bit stressful, try some yoga and ask a qualified human to guide you, not AI.

mh