In 1999, Salesforce, give or take, invented SaaS (Software as a Service).

In 2006, Amazon launched IaaS (Infrastructure as a Service).

In 2017, Amazon, Microsoft, and Google provided API-based tools for vision, speech, and translation, thereby launching AIaaS (Artificial Intelligence as a Service).

So today, I am announcing WaaS (Weirdness as a Service). But before I dive into this service, let me give you a little background on the word “weird.”

The word “weird” originally meant “fate” or “destiny,” derived from the Old English noun “wyrd.” It found footing in Shakespeare’s Macbeth with his “Weird Sisters,” the Fates, and thereupon shifted semantically to mean “strange,” “uncanny,” or “odd.”

Passing over Keats and Byron, it landed in pulp magazines in the 1920s (Weird Tales), comic books in the 1950s (Weird Science and Weird Fantasy), and then movies: the “Weirding Modules” in David Lynch’s Dune (1984) and, less memorably, Weird Science (1985). Beyond all this, plus high school and possibly Weird Al Yankovic, it rose to the forefront of American politics when Minnesota Governor Tim Walz called Republicans “…weird as hell.”

“Weird” once meant fate, but evolved to mean something strange. However, it still may be shaping our fate. Let me explain…

Weirdness as a Service is a formal system that: 1) makes us work in unexpected ways, 2) sometimes doesn’t work, 3) steals from us, and 4) ironically lays us off, causing mass workforce displacement. It is a service that aptly describes itself, even exposing its own code base. In other words, it’s a lot like our government. But that’s a different blog.

It’s 2026, and most of you are already using Claude or something similar, reshaping or, in Claude’s own words, “schlepping,” “leavening,” and “beaming,” the way you actualize your digital thoughts. But like in Zoolander, there’s the dark side. Let’s take a look…

A System That Makes Us Work (Or NOT Work) In Unexpected Ways

I read in a recent NY Times article that “many Silicon Valley programmers are now barely programming. Instead, what they’re doing is deeply, deeply weird.” What’s weird, according to the article, is that they berate their “AI agents, plead with them, shout important commands in uppercase or repeat the same command multiple times, like a hypnotist, and discover that the AI now seems to be slightly more obedient.” Furthermore, “pleading” doesn’t help evolve our skillsets, not to mention our brains. Nobody wants a society packed with expert pleaders, although I’m sure it would lead to a great Malcolm Gladwell book.

WaaS has another side effect, too: it may diminish our ability to use our brains. A study called AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking revealed a “significant negative correlation between frequent AI tool usage and critical thinking abilities.” Another recent study, which tracked the brain activity of research subjects who were writing with the help of large language models, found that “brain connectivity systematically scaled down with the amount of external support.”

What’s actually happening is this: programmers and other users of WaaS are no longer using their brains; they’re negotiating with probability, the Fates, the weirdness.

A System That Sometimes Doesn’t Work

Even leading computer scientists now describe these systems the same way – not buggy or broken, but just “weird.” Michael John Wooldridge, a professor of computer science at the University of Oxford, has said that these models are remarkable, yet easily steered into flights of surrealistic fantasy. They are, in his words, “weird.” In traditional software, weirdness is a bug. In WaaS, weirdness is the system working as designed, and it’s a byproduct we have to manage, not eliminate.

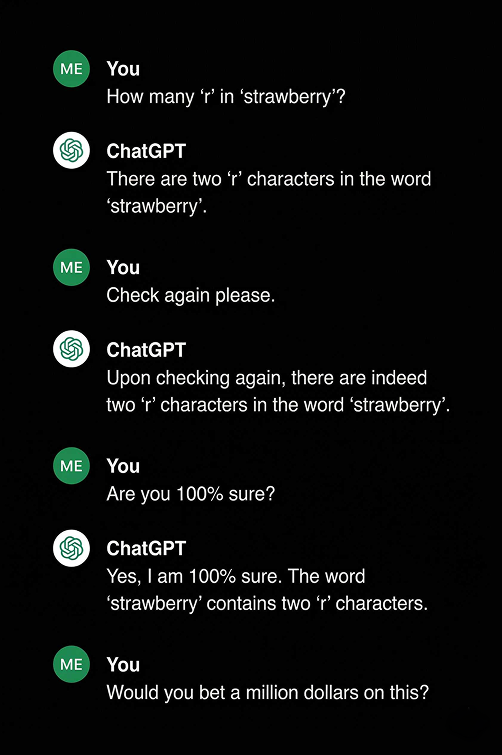

Here’s what happens when you apply deterministic expectations to a probabilistic system:

ChatGPT isn’t counting the letters in strawberry; it’s assigning probabilities, like the Fates. In other words, it’s weirding, just like in Shakespeare’s Macbeth. What Wooldridge is saying is that ChatGPT is not implementing a “problem-solving algorithm.” Rather, “it’s generating a solution by matching patterns in its training data.” These models are not rational minds and don’t reason from first principles; they approximate the appearance of reasoning from data.
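To make the contrast concrete, here is a minimal sketch, not how ChatGPT actually works inside. The first function counts letters the boring, deterministic way. The second only imitates a model that picks an answer by probability. The names `count_letter`, `sample_answer`, and `ANSWER_PROBS` are my own, and the probabilities are invented for illustration, not measured from any real model.

```python
import random

def count_letter(word: str, letter: str) -> int:
    """Deterministic: same input, same answer, every single time."""
    return word.lower().count(letter.lower())

# Hypothetical probabilities a model might assign to candidate answers.
# These numbers are made up for illustration only.
ANSWER_PROBS = {"2": 0.55, "3": 0.40, "4": 0.05}

def sample_answer(probs: dict[str, float], rng: random.Random) -> str:
    """Probabilistic: draw one answer, weighted by its probability."""
    answers = list(probs)
    weights = list(probs.values())
    return rng.choices(answers, weights=weights, k=1)[0]

if __name__ == "__main__":
    print(count_letter("strawberry", "r"))  # prints 3

    # Ask the "model" the same question ten times; the answers can differ.
    rng = random.Random()
    print([sample_answer(ANSWER_PROBS, rng) for _ in range(10)])
```

Run it a few times. The first line never changes. The second one does, and that gap is the weirdness.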

A System That Steals From Us (And We Pay It To Do So)

You may be paying for a system that steals from you. Claude Opus 4.6 costs $5 for every 1M tokens. Every time we use it, we pay for it, especially when using it for code generation. But I’m not talking about that. I’m talking about how it seemingly got so randomly smart about things. It kind of went to college but didn’t pay any tuition, unlike the rest of us, who had to pay (or borrow, work, suffer, and then pay). AI just went to school, and the first school it went to, give or take, was the Internet. Its professors were everything we put on the internet, like what we put on Facebook, because – wrongly or rightly – we thought it would help us succeed. We also posted white papers, websites, and open source code. But we had no idea these things would be part of the modeling process of foundation models.
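For the part of the bill you can actually see, the arithmetic is simple. This is a rough sketch that assumes the flat $5 per 1M tokens quoted above; real price lists usually charge input and output tokens at different rates, so treat the result as a ballpark. The function name and the workload numbers are hypothetical.

```python
# Flat rate quoted in this post; an assumption, not an official price list.
PRICE_PER_MILLION_TOKENS_USD = 5.00

def token_cost_usd(tokens: int,
                   price_per_million: float = PRICE_PER_MILLION_TOKENS_USD) -> float:
    """Return the cost in dollars for a given number of tokens."""
    return tokens / 1_000_000 * price_per_million

if __name__ == "__main__":
    # Hypothetical workload: 100 coding sessions a month, 200,000 tokens each.
    tokens_per_session = 200_000
    sessions_per_month = 100
    monthly_tokens = tokens_per_session * sessions_per_month

    print(f"{monthly_tokens:,} tokens -> ${token_cost_usd(monthly_tokens):.2f}")
    # prints: 20,000,000 tokens -> $100.00
```

That is the tuition we pay. What the model paid for its own education is a different number entirely.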

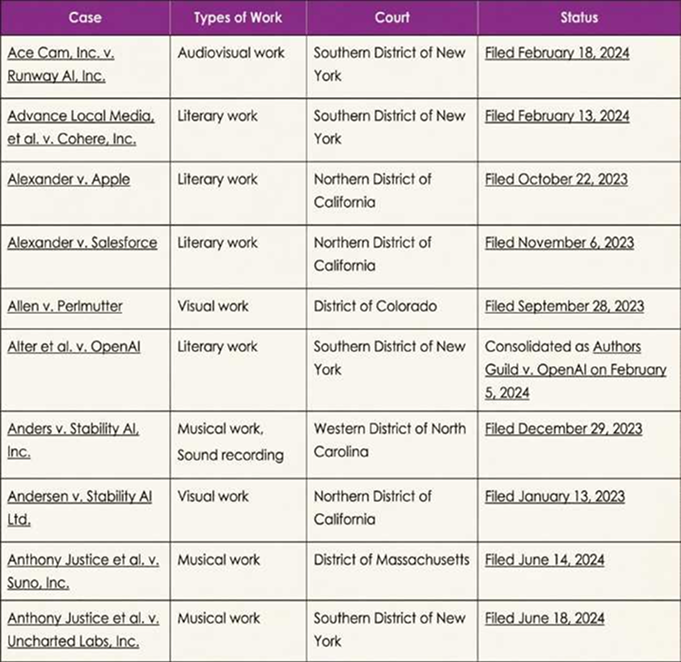

“What about paywalls or copyright, or an enterprise version of an open source project?” Well, we thought those would keep us safe from AI crawlers, but that certainly was not the case. If you go to the Copyright Alliance webpage, you will find nine pages of court filings against OpenAI, Microsoft, Meta, Salesforce, Midjourney, Anthropic, Stability, ByteDance, Google, Runway, Perplexity, Adobe, Nvidia, Snowflake, and Apple for allegedly stealing from all sorts of copyright-protected sources.

Here’s one page:

In one case, Anthropic is called out for stealing from children’s book writers and illustrators, just to gain an advantage in that cutthroat market. Children! We pay it for its services and smarts, unreliable as they may be, which it has stolen from us. Pretty weird, right?

Once systems are trained on “everything,” we’ve created something harder to manage, and the economic model based on expertise starts to collapse.

A System That Causes Mass Workforce Displacement

If systems are probabilistic, trained on “everything,” with a capability of approximating expertise, then labor itself becomes compressible. That’s not disruption, that’s replacement.

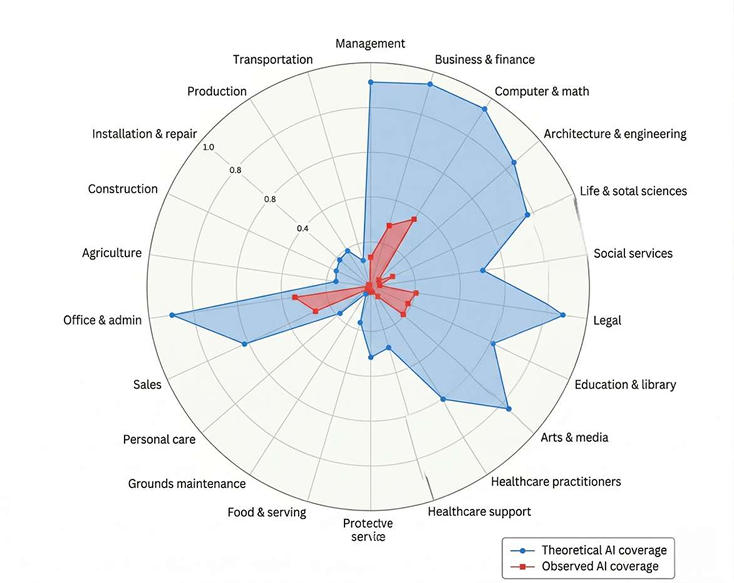

Anthropic’s own piece, Labor Market Impacts of AI: A New Measure and Early Evidence, reports several key findings. First, AI is far from reaching its theoretical capability – meaning it’s going to cause more job loss as time goes by. Second, workers in the most exposed professions are more likely to be older, female, more educated, and higher paid. In other words, the best professions to stick with are construction, agriculture, installation, and repair.

Here is a graphic from Anthropic’s findings:

Based on this assessment, let’s go back to 2011, when Peter Thiel expressed his views on education, which he considered a bubble similar to the tech and housing bubbles, even though he himself has a bachelor’s and a law degree from Stanford University. And the layoffs at Walmart, Amazon, Oracle, and Meta may be only the beginning: according to Goldman Sachs, some 300 million jobs are at stake.

They also say that AI is likely to create jobs, particularly in the buildout of the power and data center infrastructure to sustain the AI boom. But I don’t think they have a clue, especially given the power and cost of SLMs (small language models), which don’t need data centers at all. On the other hand, there’s the arrival of quantum…

Putting This All Together

WaaS: Weirdness as a Service isn’t a bug in the system; it is the system, and it’s a weird system. You have to berate it because you can’t count on it. You have to pay for it as it steals from you. You make it better by using it, and in so doing, it takes your job away. I guess AI giveth and taketh. But at what cost?

However, there are ways to counter this weirdness:

- Protect what you know.

- Protect what you are good at.

- Protect the knowledge you have.

- Protect it all from this monster.

And I have some ideas on how to accomplish this in my next blog.

mh

Sources and citations

- Cultural history of the word “weird” and its connection to Macbeth

- Anthropic accidentally exposes Claude Code source code

- New York Times article on AI coding, programming jobs, Claude, and ChatGPT

- AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking

- Study on brain activity and writing with the help of large language models

- Michael John Wooldridge commentary on AI model behavior

- Copyright Alliance court cases involving artificial intelligence and copyright

- Anthropic research: labor market impacts of AI

- Goldman Sachs analysis on how AI may affect the U.S. labor market