Protegrity & Kafka

Built and supported by Protegrity, this non-native Kafka integration protects data at the stream edge—via producers/consumers or a proxy—without modifying Kafka brokers.

Integration type

- Non-Native

- Streaming

Partner

Yes

Supported platforms

- AWS

- Azure

- GCP

Use cases

- Cloud Migration & SaaS Integration

- Internal Data Democratization & External Data Sharing With Partners/Vendors

- Privacy-enhanced Training Data for AI/ML Models

- Regulatory Compliance & Data Sovereignty

Overview

Protegrity’s integration with Apache Kafka enables real-time protection of sensitive data in motion. By applying field-level tokenization and encryption at the point of ingestion, organizations can stream and analyze data securely without compromising privacy or compliance. This integration supports high-throughput analytics, AI, and operational use cases across industries, ensuring persistent protection throughout the Kafka pipeline.

Key Integration Feature

Protegrity for Kafka offers organizations robust protection for sensitive data by automatically tokenizing or encrypting information as soon as it enters the Kafka pipeline. This real-time security measure enables businesses to confidently leverage streaming data for analytics, AI, and operational processes without risking exposure of personal or regulated information. The solution is designed for seamless integration, requiring minimal changes to existing workflows and preserving Kafka’s high performance. As a result, companies can maintain compliance with privacy regulations, prevent data breaches, and support innovation while safeguarding critical data throughout the entire streaming lifecycle.

Features & Capabilities

Explore the core capabilities for protecting Kafka streams—field-level controls, centralized governance, and support for analytics and AI workloads without disrupting throughput.

01

End-to-End Streaming Data Protection

Why It Matters

Kafka is widely used to transport sensitive data, including regulated information such as PII, PHI, and financial records, across real-time analytics and operational pipelines. Protecting this data in motion is critical for meeting compliance requirements (e.g., GDPR, HIPAA, PCI DSS) and preventing data breaches that could result in reputational and financial damage. Ensuring persistent protection throughout the Kafka pipeline enables organizations to confidently leverage streaming architectures for advanced analytics and AI without exposing sensitive information.

How it Works

Protegrity applies field-level tokenization and encryption before data enters Kafka, ensuring that sensitive information remains protected even if intercepted during transit. This persistent protection means that, even if unauthorized access occurs within the streaming pipeline, the data remains unintelligible and secure, supporting zero-trust architectures and robust data governance.
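A minimal sketch of the producer-side pattern described above, assuming a JSON order record. The names `demo_token`, `DEMO_KEY`, and `SENSITIVE_FIELDS` are hypothetical, and the HMAC-based token is only a one-way stand-in for illustration. It is not Protegrity's tokenization or API.

```python
import hashlib
import hmac
import json

# Stand-in secret for the demo; real deployments rely on centrally managed policy and keys.
DEMO_KEY = b"demo-only-key"

# Hypothetical set of fields treated as sensitive in this example.
SENSITIVE_FIELDS = ("email", "card_number")


def demo_token(value: str) -> str:
    """Deterministic stand-in token: the same input always yields the same token."""
    digest = hmac.new(DEMO_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]


def protect_record(record: dict) -> dict:
    """Return a copy of the record with sensitive fields replaced by tokens."""
    protected = dict(record)
    for name in SENSITIVE_FIELDS:
        if protected.get(name) is not None:
            protected[name] = demo_token(str(protected[name]))
    return protected


def to_kafka_value(record: dict) -> bytes:
    """Protect first, then serialize; only these bytes would be handed to a producer."""
    return json.dumps(protect_record(record), sort_keys=True).encode("utf-8")


order = {
    "order_id": 1001,
    "email": "ana@example.com",
    "card_number": "4111111111111111",
    "total": 42.5,
}
payload = to_kafka_value(order)
```

Because protection runs before serialization, the clear-text email and card number never appear in the bytes that would be published, while non-sensitive fields such as `order_id` pass through unchanged.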

02

Seamless Integration with Minimal Code Changes

Why It Matters

Security solutions must integrate smoothly into existing data pipelines without causing development bottlenecks or requiring major architectural changes. By minimizing code changes, organizations can accelerate deployment, reduce operational risk, and maintain business agility while ensuring data protection is consistently applied across all Kafka workloads.

How it Works

Protegrity offers integration via REST API, Java SDK, or the Kafka REST Proxy, allowing developers to implement data protection with just a few lines of code. This flexibility supports a wide range of deployment scenarios, from legacy on-premises clusters to modern cloud-native Kafka services, ensuring rapid adoption and consistent security controls.
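One way to keep application changes small is to hide the protection call behind a single function and plug it into the serializer the producer already uses. The sketch below assumes that approach. `Protector`, `make_value_serializer`, and `fake_protect` are hypothetical names, and the REST or SDK call itself is deliberately left out because its signature is not described here.

```python
import json
from typing import Callable, Dict

# A protector maps (data element name, clear value) to a protected value.
# In practice it would wrap a REST call or an SDK call; both are outside this sketch.
Protector = Callable[[str, str], str]


def make_value_serializer(
    protect: Protector, field_elements: Dict[str, str]
) -> Callable[[dict], bytes]:
    """Build a serializer that protects the configured fields, then encodes JSON."""

    def serialize(record: dict) -> bytes:
        out = dict(record)
        for field_name, element in field_elements.items():
            if out.get(field_name) is not None:
                out[field_name] = protect(element, str(out[field_name]))
        return json.dumps(out).encode("utf-8")

    return serialize


def fake_protect(element: str, value: str) -> str:
    """Placeholder protector for local testing; it reverses the string and tags it."""
    return f"{element}:{value[::-1]}"


serializer = make_value_serializer(fake_protect, {"email": "EMAIL", "ssn": "SSN"})
encoded = serializer({"id": 7, "email": "ana@example.com", "ssn": "123-45-6789"})
```

Many Kafka clients accept a serializer callable (for example, the `value_serializer` argument of kafka-python's `KafkaProducer`), so a function like `serializer` could be supplied there while the rest of the producer code stays the same.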

03

Centralized Policy Management & Compliance

Why It Matters

Unified, centralized policy management streamlines governance, reduces administrative overhead, and ensures that data protection policies are enforced consistently across all Kafka environments. This is essential for passing audits, demonstrating regulatory compliance, and maintaining a strong security posture in complex, multi-cloud or hybrid infrastructures.

How it Works

Policies are defined and managed centrally in the Protegrity Enterprise Security Administrator (ESA) and are automatically applied to all protected data streams. Every protection action is logged for audit and compliance purposes, providing a comprehensive record for regulators and internal stakeholders, and enabling rapid incident response if anomalies are detected.
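The following sketch illustrates the idea of one central policy plus an audit trail for every protect and unprotect operation. It is an in-memory toy under stated assumptions: `POLICY`, `AuditLog`, and `DemoVault` are hypothetical names and do not reflect ESA's actual policy format, logging schema, or APIs.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical central policy: which roles may unprotect each data element.
POLICY = {
    "EMAIL": {"unprotect_roles": {"marketing_service"}},
    "CARD": {"unprotect_roles": {"fraud_analyst"}},
}


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, user: str, action: str, element: str, allowed: bool) -> None:
        """Append one audit entry per operation, whether allowed or denied."""
        self.entries.append({
            "ts": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "element": element,
            "allowed": allowed,
        })


class DemoVault:
    """In-memory stand-in for a protection service; not a real tokenization scheme."""

    def __init__(self, policy: dict, audit: AuditLog) -> None:
        self._policy = policy
        self._audit = audit
        self._by_value = {}
        self._by_token = {}

    def protect(self, user: str, element: str, value: str) -> str:
        key = (element, value)
        if key not in self._by_value:
            token = f"{element.lower()}_{len(self._by_value):06d}"
            self._by_value[key] = token
            self._by_token[token] = value
        self._audit.record(user, "protect", element, True)
        return self._by_value[key]

    def unprotect(self, user: str, role: str, element: str, token: str) -> str:
        allowed_roles = self._policy.get(element, {}).get("unprotect_roles", set())
        allowed = role in allowed_roles
        self._audit.record(user, "unprotect", element, allowed)
        # Callers without the right role keep seeing the token, never the clear value.
        return self._by_token.get(token, token) if allowed else token


audit = AuditLog()
vault = DemoVault(POLICY, audit)
tok = vault.protect("orders-producer", "CARD", "4111111111111111")
denied = vault.unprotect("bi-dashboard", "analyst", "CARD", tok)
granted = vault.unprotect("fraud-app", "fraud_analyst", "CARD", tok)
```

Here the denied request is still written to the audit log, which is the property that makes the record useful for compliance review and incident response.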

04

Analytics & AI Empowerment: Insights Without Compromise

Why It Matters

Protected data can still be analyzed, visualized, and used to train AI/ML models—maximizing business value while minimizing compliance risk.

How it Works

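Tokenization is consistent and preserves formats and referential integrity, so protected fields can still be joined, grouped, and fed into models. For example, a retail enterprise combines tokenized sales and customer data from multiple systems through Denodo to build advanced recommendation models without violating PCI DSS.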
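To illustrate why analysis still works on protected data, the sketch below groups and joins two small datasets on a tokenized customer key. It assumes tokens are deterministic (equal inputs give equal tokens). `demo_token` is a hypothetical HMAC-based stand-in, not Protegrity's tokenization, and the sample records are invented for the example.

```python
import hashlib
import hmac
from collections import defaultdict

DEMO_KEY = b"demo-only-key"  # stand-in; not how keys are managed in practice


def demo_token(value: str) -> str:
    """Deterministic stand-in: equal inputs give equal tokens, so joins still line up."""
    return hmac.new(DEMO_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()[:12]


# Two hypothetical datasets that were protected independently at their sources.
sales = [
    {"customer": demo_token("ana@example.com"), "amount": 30.0},
    {"customer": demo_token("bo@example.com"), "amount": 12.5},
    {"customer": demo_token("ana@example.com"), "amount": 7.5},
]
profiles = [
    {"customer": demo_token("ana@example.com"), "segment": "loyalty"},
    {"customer": demo_token("bo@example.com"), "segment": "new"},
]

# Group by token: no clear-text email is needed to total each customer's spend.
spend_by_customer = defaultdict(float)
for event in sales:
    spend_by_customer[event["customer"]] += event["amount"]

# Join to profiles on the same token, then aggregate by segment.
segment_by_customer = {p["customer"]: p["segment"] for p in profiles}
spend_by_segment = defaultdict(float)
for customer, total in spend_by_customer.items():
    spend_by_segment[segment_by_customer[customer]] += total
```

The aggregation produces per-segment totals even though neither dataset ever carried an email address in the clear.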

05

Broad Ecosystem Support

Why It Matters

Kafka is deployed across a diverse range of environments—including on-premises, hybrid, and multi-cloud—so data protection must be consistent regardless of infrastructure. Supporting all major managed and self-managed Kafka platforms ensures organizations can scale securely and maintain compliance as their data landscape evolves.

How it Works

Protegrity enables seamless protection for Apache Kafka in any environment, including Cloudera, AWS MSK, Azure Event Hubs, Google Cloud Platform, and Confluent. The solution supports both self-managed and managed deployments, ensuring that data protection policies travel with the data, regardless of where Kafka is running or how it is managed.

Architecture & Sample Data Flow

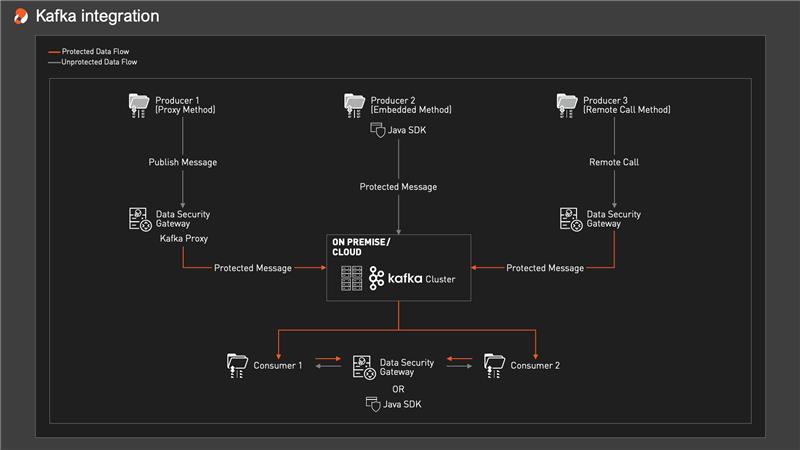

The Kafka + Protegrity integration architecture inserts data protection into the streaming pipeline in a way that is transparent to Kafka itself. Rather than modifying the Kafka brokers, Protegrity works at the edges of the stream (the producers and consumers), on a proxy layer, or both, applying protection as data enters and leaves Kafka.

The data journey explained

01

Ingestion

When data such as customer orders enters the pipeline via Kafka producers, the Protegrity integration identifies sensitive fields, such as email addresses and credit card numbers, and sends them for tokenization or encryption before the message is published to Kafka. As a result, raw sensitive data is protected immediately and never enters Kafka in clear text.

02

Transformation

Protected data flows through Kafka topics and stream processing stages, such as Kafka Streams or ksqlDB, with sensitive fields tokenized but formats and referential integrity preserved, so analytic operations like joining or grouping still work securely. This approach enables analytics and enrichment on protected data, ensuring privacy without sacrificing functionality, and authorized users can reverse the protection when needed.

03

Data Delivery

Processed, protected messages are delivered to downstream consumers such as databases, data lakes, dashboards, or microservices, and Protegrity restricts access to unprotected data to authorized consumers. Sensitive information stays protected unless specific privileges and policy allow its exposure, upholding the principle of least privilege at every point of data delivery.

04

Monitoring & Logging

Protegrity’s platform logs every protection and unprotection operation in the Kafka pipeline for auditing and compliance, while automated health checks and alerts ensure any issues are detected and sensitive data is never left unprotected. Security administrators can centrally view all protection events, providing confidence that the integration is operating securely and as required.

Use Cases

See how teams use Protegrity + Kafka to protect streaming data for analytics, GenAI, and operational systems—without slowing throughput or breaking downstream workflows.

Finance

Securing Real-Time Transactions for Fraud & Risk Scoring

Challenge

A global bank must detect fraud in real time by streaming sensitive transaction data through Kafka, while complying with PCI DSS and GDPR. Batch encryption is too slow, and a breach could expose millions of records.

Solution

The bank uses Protegrity to tokenize and encrypt sensitive data in real time as it streams through Kafka. Card numbers are tokenized and personal identifiers are masked, so fraud detection models work with consistent tokens rather than actual card details. Only authorized analysts can view the original data when necessary.

Result

The bank achieves rapid fraud monitoring and reduces breach risks, maintaining compliance since real card data isn’t visible in Kafka. Fraud detection speed improves by 35%, and tokenized data enables secure collaboration with third-party AI services for risk assessment.

Healthcare Payers

Protecting Member & Claims Data Across Streaming Workflows

Challenge

Hospitals and healthcare providers stream sensitive patient data—like real-time vitals, EHR updates, and lab results—to central analytics systems. Since this information includes PHI protected by HIPAA, streaming it without safeguards risks privacy breaches.

Solution

By integrating Protegrity with Kafka, organizations can build a HIPAA-compliant pipeline. PHI is automatically tokenized as data enters Kafka from clinics or devices, replacing identifiers like names or Social Security Numbers with tokens. Analytics teams can use this data for trend analysis without accessing identifying details, and authorized applications can detokenize when necessary, with audit trails.

Result

Providers monitor and analyze patient data in real time—improving outcomes while maintaining privacy and regulatory compliance. For example, one hospital used tokenized IoT heart monitor feeds via Kafka to detect arrhythmias, cut response times by 40%, and securely transmit data over the cloud with no PHI exposed.

Retail

Challenge

A major retailer uses Kafka to process real-time sales, inventory, and customer data across stores and online. This data includes sensitive PII and sometimes payment info, creating privacy risks under regulations like GDPR and CCPA. The retailer needs to protect customer data while still supporting personalized marketing and transaction reconciliation.

Solution

With Protegrity’s Kafka integration, customer identifiers are tokenized at data capture—emails and names are turned into consistent tokens, preserving format and usability for analytics and personalization. Transaction details remain visible for operational use, but only authorized systems can detokenize for marketing outreach.

Result

The retailer runs a secure, real-time data pipeline that enables omnichannel insights and personalization without exposing actual PII. Unauthorized access sees only tokens, minimizing breach risk and regulatory exposure, while business performance improves with faster inventory optimization and more targeted marketing.

DEPLOYMENT

Deploy Protegrity with Kafka without touching broker internals. Protect sensitive fields at the edges of the stream (producers/consumers) or via a gateway/proxy, then manage policies centrally for consistent enforcement across environments.

On-Prem

Cloud/Managed Kafka (AWS, Azure, etc.)

For broader platform integration

Enterprise Deployment Pattern:

Scalable and Secure Architecture:

RESOURCES

Quick reads and implementation guides to help architects and developers protect Kafka events end-to-end—covering policy setup, producer/consumer patterns, and cloud deployment options.

Docs Center

Implementation patterns, deployment guidance, and policy configuration for protecting Kafka messages in motion—tokenization, encryption, masking, audit logs, and access controls.

Protegrity Developer Edition GitHub

Clone sample code and test protection in your own Kafka workflows. Validate tokenization/masking behavior and policy outcomes before scaling to Team or Enterprise.

Frequently Asked Questions

Protegrity integrates with Kafka at the producer/consumer level, not by modifying Kafka itself. Integration options include calling Protegrity’s REST API, embedding its Java SDK in application code, or using Kafka Connect transforms/interceptors. No changes are required on the broker side, and Protegrity provides examples to streamline implementation.

Performance impact is minimal. SDK integration is fastest; the REST API adds minor latency (on the order of microseconds to milliseconds), which local deployment and concurrent requests help offset. Protegrity scales to handle large workloads, as proven by enterprise benchmarks. Format-preserving tokenization avoids message bloat and maintains downstream compatibility.
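As a rough illustration of how concurrency can offset per-call latency, the sketch below issues simulated remote protect calls through a thread pool. `slow_protect` is a hypothetical stand-in whose 10 ms sleep imitates a network round trip. It is not a Protegrity API, and the numbers are not benchmarks.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def slow_protect(value: str) -> str:
    """Stand-in for a remote protect call; the sleep simulates network latency."""
    time.sleep(0.01)
    return "tok_" + value[::-1]


def protect_batch(values: list, max_workers: int = 8) -> list:
    """Protect many values concurrently; results come back in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(slow_protect, values))


emails = [f"user{i}@example.com" for i in range(40)]
tokens = protect_batch(emails)
```

With eight workers the 40 simulated calls overlap instead of running back to back, and `Executor.map` returns results in input order so each token still lines up with its source record.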

Yes. Protegrity works with any Kafka deployment—on-premises, managed services, or cloud—since it operates at the client/application layer. Example architectures exist for AWS and Azure, and customers successfully use Protegrity with cloud-native pipelines, containers, or CI/CD. Compatibility is maintained across Kafka versions by avoiding broker plugins.

Protegrity supports protecting any identifiable field in formats like JSON, AVRO, XML, plain text, or binary payloads. Field-level protection applies to various data types including strings, numbers, dates, and partial fields. Policies define which parts of the message are protected, making the solution adaptable to most use cases, from selective masking to full payload encryption.
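A small sketch of policy-driven field selection on a nested JSON message, including partial protection of one field. The dotted-path policy format, the mode names, and the helper functions are all hypothetical and chosen only for illustration. The hash-based `tokenize` is a stand-in, not a real tokenization scheme.

```python
import copy
import hashlib

# Hypothetical policy: dotted path into the message -> protection mode.
FIELD_POLICY = {
    "customer.email": "tokenize",
    "payment.card_number": "mask_keep_last4",
}


def tokenize(value: str) -> str:
    """Placeholder token derived from a hash; a real service would apply its own policy."""
    return "tok_" + hashlib.sha256(value.encode("utf-8")).hexdigest()[:12]


def mask_keep_last4(value: str) -> str:
    """Partial protection: hide everything except the last four characters."""
    return "*" * max(len(value) - 4, 0) + value[-4:]


MODES = {"tokenize": tokenize, "mask_keep_last4": mask_keep_last4}


def apply_policy(message: dict, policy: dict) -> dict:
    """Return a protected deep copy; fields not named in the policy are untouched."""
    out = copy.deepcopy(message)
    for path, mode in policy.items():
        *parents, leaf = path.split(".")
        node = out
        for key in parents:
            node = node.get(key) if isinstance(node, dict) else None
            if node is None:
                break
        # Only string leaves that actually exist in the message are protected.
        if isinstance(node, dict) and isinstance(node.get(leaf), str):
            node[leaf] = MODES[mode](node[leaf])
    return out


message = {
    "order_id": 5,
    "customer": {"email": "ana@example.com", "tier": "gold"},
    "payment": {"card_number": "4111111111111111"},
}
protected_message = apply_policy(message, FIELD_POLICY)
```

Keeping the field selection in a policy structure rather than in application logic means the same code can cover selective masking of one field or protection of many fields by changing only the policy.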

Authorized consumers unprotect data through Protegrity’s APIs or SDKs based on role-based access control. Unprotection occurs only when explicitly permitted and is audited. Many analytics use tokenized values, but cleartext is retrieved securely when necessary. Kafka itself does not perform decryption.

See the Protegrity platform in action

Accelerate data access and turn data security into a competitive advantage with Protegrity’s uniquely data-centric approach to data protection.

Get an online or custom live demo.