Don’t Let Data Exposure Derail Your AI Projects

AI Can’t Scale Without Data Integrity

AI teams are under pressure to move fast, but once sensitive data enters AI workflows, risk compounds quickly. LLMs, RAG pipelines, and agentic systems can leak data, drift off-policy, or produce results that can’t be validated or audited.

The result: stalled projects, repeated security reviews, rising costs, and growing skepticism from leadership. These aren’t model problems – they are data integrity problems.

Data Integrity Requires a Secure Foundation

AI systems require more than observability, prompts, or model-level controls. True AI integrity is established when data meaning, policy, and protection are enforced directly at the data layer, independent of applications or model behavior.

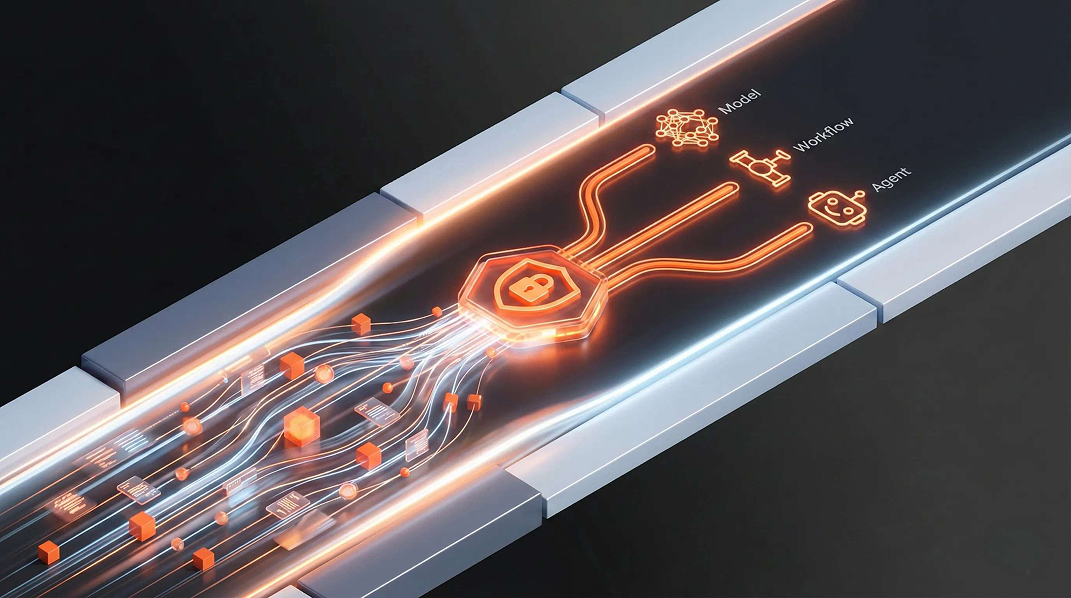

Classify Data Before AI Uses It

Identify and classify sensitive data before it enters AI workflows, so models and agents reason over governed data with known risks and constraints.
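To make the idea concrete, here is a minimal sketch of classifying text before it reaches a model. It assumes a simple pattern-based approach; the `PATTERNS` table, the label names, and the `classify` function are hypothetical and only for illustration. A production classifier would cover far more data types and would likely use more than regular expressions.

```python
import re

# Hypothetical patterns for illustration only; real coverage would be much broader.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def classify(text: str) -> set[str]:
    """Return the set of sensitive-data labels detected in the text."""
    return {label for label, pattern in PATTERNS.items() if pattern.search(text)}


if __name__ == "__main__":
    # Labels can travel with the record so downstream AI steps know its risk.
    print(classify("Reach Jane at jane@example.com, SSN 123-45-6789"))
```

The returned labels can be attached to a record as metadata, so that later steps act on a known classification rather than re-inspecting raw values.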

Keep Data Usable for AI

Maintain data structure, meaning, and governed relationships so AI systems can reason accurately without exposing sensitive information or losing intent.

Enforce Policies Across AI Workflows

Define policies once and enforce them consistently and deterministically across AI workflows without relying on prompts, hard-coded rules, or manual review.
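One way to picture "define once, enforce deterministically" is a policy table expressed as data and a single check that every workflow calls. The sketch below is an assumption about how that could look, not a description of any specific product: the `Policy` class, the `POLICIES` table, the label names, and the purposes are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    label: str                   # data classification the policy applies to
    allowed_purposes: frozenset  # purposes for which this data may be used


# Hypothetical policy table, defined once and shared by every workflow.
POLICIES = {
    "email": Policy("email", frozenset({"support"})),
    "us_ssn": Policy("us_ssn", frozenset()),  # never allowed in AI workflows
}


def is_allowed(labels, purpose) -> bool:
    """Deterministic check: every label must permit the purpose.

    Labels with no registered policy are denied by default.
    """
    for label in labels:
        policy = POLICIES.get(label)
        if policy is None or purpose not in policy.allowed_purposes:
            return False
    return True


if __name__ == "__main__":
    print(is_allowed({"email"}, "support"))   # same inputs always give the same answer
    print(is_allowed({"email"}, "training"))
```

Because the decision depends only on the labels and the declared purpose, the same request yields the same result regardless of which model or prompt is involved.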

Protect Data Without Limiting Its Use

Transform sensitive data to preserve utility while preventing exposure of underlying values, enabling safe sharing and use across AI workflows.
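As an illustration of a transformation that preserves utility, the sketch below replaces email addresses with keyed, deterministic tokens. It assumes HMAC-SHA256 pseudonymization, which is only one of several possible techniques; the `tokenize_emails` function, the token format, and the `masked.invalid` domain are hypothetical choices for this example.

```python
import hashlib
import hmac
import re

EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def tokenize_emails(text: str, key: bytes) -> str:
    """Replace each email address with a keyed, deterministic token.

    The same address (case-insensitive) always maps to the same token under
    the same key, so records can still be joined and counted, while the
    original value cannot be read from the output.
    """
    def replace(match: re.Match) -> str:
        normalized = match.group(0).lower().encode("utf-8")
        digest = hmac.new(key, normalized, hashlib.sha256).hexdigest()
        # Keep an email-like shape so downstream parsers still work.
        return f"user_{digest[:12]}@masked.invalid"

    return EMAIL.sub(replace, text)


if __name__ == "__main__":
    secret = b"demo-key-do-not-use-in-production"  # hypothetical key
    print(tokenize_emails("Ticket from jane@example.com about billing", secret))
```

Keeping the key outside the AI workflow means the tokens stay consistent for analysis while only a holder of the key can reproduce the mapping.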

Control AI at Runtime

Evaluate and enforce AI interactions in real time using semantic understanding to prevent data leakage, off-policy behavior, and unintended use.